Abstract: 3D reconstruction is a beneficial technique to generate 3D geometry of scenes or objects for various applications such as computer graphics, industrial construction, and civil engineering. There are several techniques to obtain the 3D geometry of an object. Close-range photogrammetry is an inexpensive, accessible approach to obtaining high-quality object reconstruction. However, state-of-the-art software systems need a

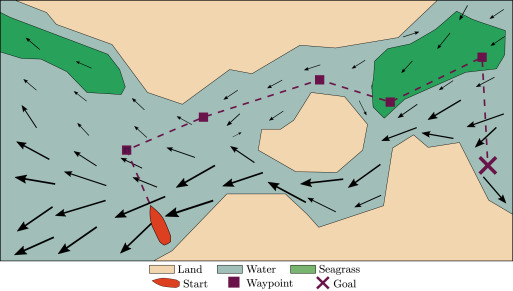

stationary scene or a controlled environment (often a turntable setup with a black background), which can be a limiting factor for object scanning. This work presents a method that reduces the need for a controlled environment and allows the capture of multiple objects with independent motion. We achieve this by creating a preprocessing pipeline that uses deep learning to transform a complex scene from an uncontrolled environment into multiple stationary

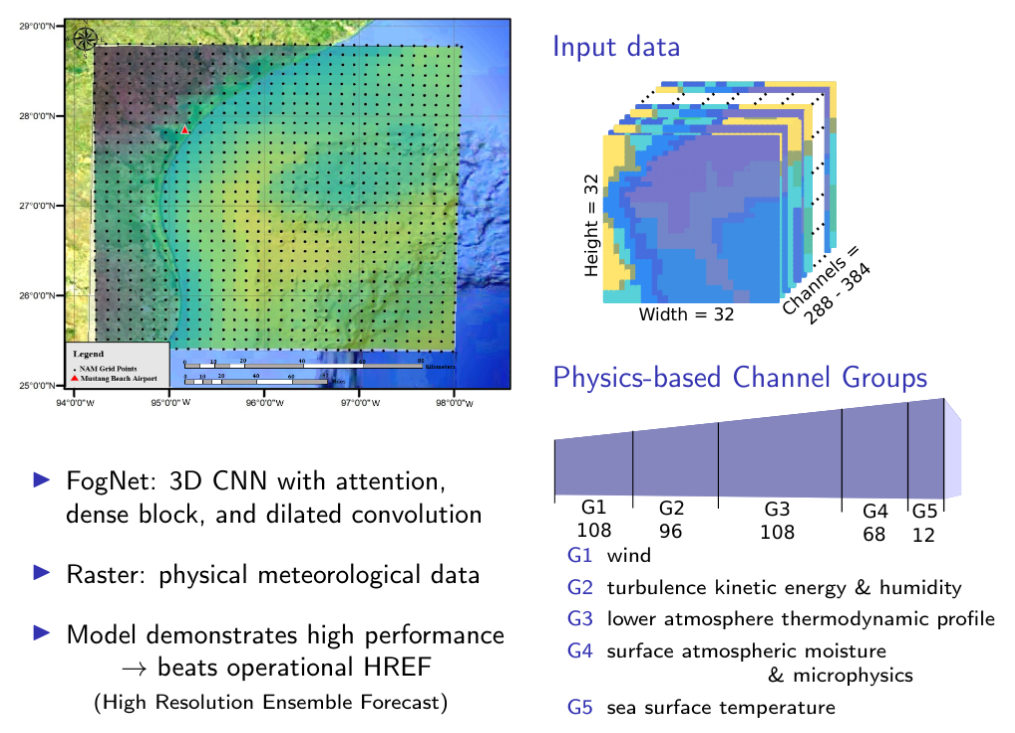

scenes with a black background that is then fed into existing software systems for reconstruction. Our pipeline achieves this by using deep learning models to detect and track objects through the scene. The detection and tracking pipeline uses semantic-based detection and tracking and supports using available pretrained or custom networks. We develop a correction mechanism to overcome some detection and tracking shortcomings, namely, object-reidentification

and multiple detections of the same object. We show detection and tracking are effective techniques to address scenes with multiple motion systems and that objects can be reconstructed with limited or no knowledge of the camera or the environment.
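
As an illustration of the preprocessing idea described above, the following minimal sketch splits one multi-object sequence into per-object sequences with a black background. It is not the implementation from this work. The data layout (frames as dictionaries with `image` and `detections`), the function names, the use of bounding boxes rather than segmentation masks, and the IoU threshold of 0.8 for dropping duplicate detections are all assumptions made for the example. A real detector and tracker would supply the boxes, scores, and track IDs.

```python
from collections import defaultdict


def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def drop_duplicate_detections(detections, threshold=0.8):
    """Keep only the highest-scoring detection among heavily overlapping boxes.

    Assumption: duplicates of the same object overlap with IoU >= threshold.
    """
    kept = []
    for det in sorted(detections, key=lambda d: d["score"], reverse=True):
        if all(iou(det["box"], k["box"]) < threshold for k in kept):
            kept.append(det)
    return kept


def black_out_background(image, box):
    """Return a copy of `image` (rows of pixel values) with pixels outside `box` set to 0."""
    x1, y1, x2, y2 = box
    return [
        [px if (x1 <= x < x2 and y1 <= y < y2) else 0 for x, px in enumerate(row)]
        for y, row in enumerate(image)
    ]


def split_into_object_scenes(frames):
    """Group black-background crops by track ID: one image sequence per object."""
    scenes = defaultdict(list)
    for frame in frames:
        for det in drop_duplicate_detections(frame["detections"]):
            scenes[det["track_id"]].append(
                black_out_background(frame["image"], det["box"])
            )
    return dict(scenes)
```

Each resulting sequence contains a single object on a black background, so it could in principle be passed to an existing photogrammetry tool as if it were a separate stationary scene.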